| Author |

Topic |

|

|

ihab17

Italy

240 Posts |

Posted - 01/22/2021 : 13:01:44

|

Hello

This is my first attempt to analyze my captured data after the last firmware update of my GMC-600+ and a complete reset.

The following settings were then changed

- Fast Estimate Time: 60

- Save Data: Every Minute

- Tube Voltage: 500 (as recommended by the tube manufacturer LND 7317)

- HV Factor left to the default value of 164

- Tube Dead Time: 50 microseconds

- Enable Dead Time: ENABLED

Data collection

- Counter attached to DC power supply while collecting data

- Part of the data were collected for more than 24 hours in my house

- The other part of the data was collected in my garage (floor -1, a place where I suspect radon)

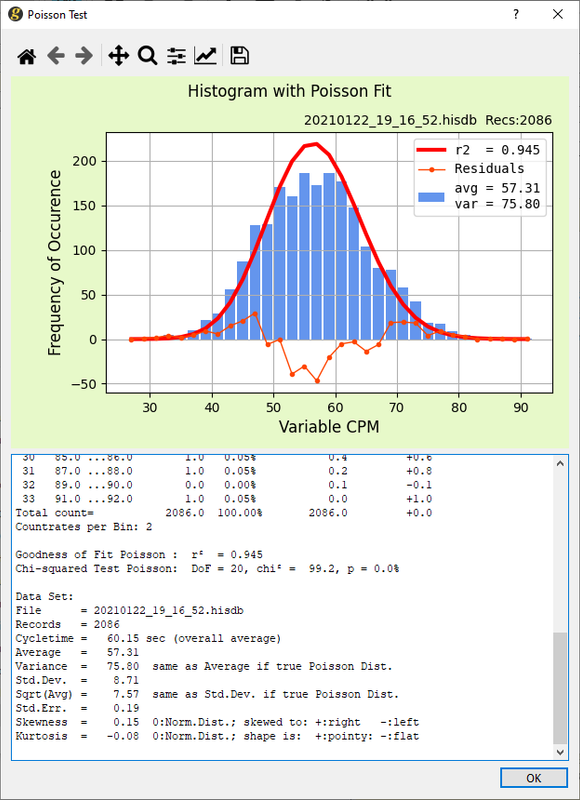

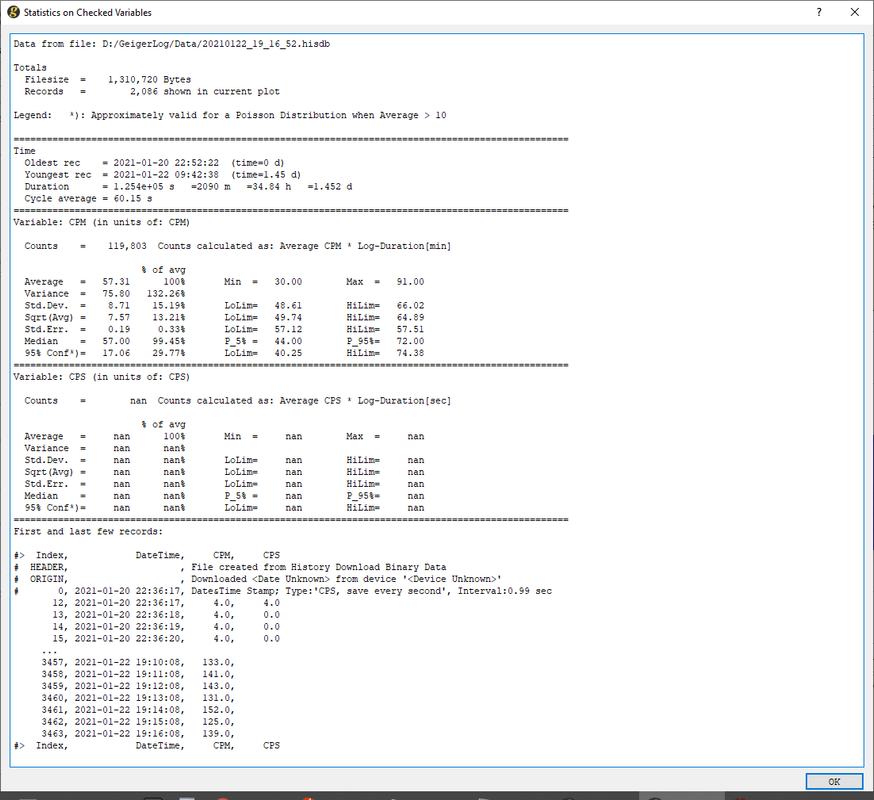

Data analyzed from the Garage at floor -1

Data analyzed from my home at first floor

As my first exercise, how do you "experts" evaluate my collected data? How do we interpret the quality of the data? How can the device be better calibrated to give better results? Are my settings correct, or do you think they should be changed, and why?

Thank you |

Edited by - ihab17 on 01/22/2021 13:34:28

|

|

| Reply #1

ullix

Germany

1242 Posts |

Posted - 01/24/2021 : 08:38:57

|

@Ihab17: Thanks for the detailed posting.

I will show what my standard approach of looking at counter data is, and then apply this to your data.

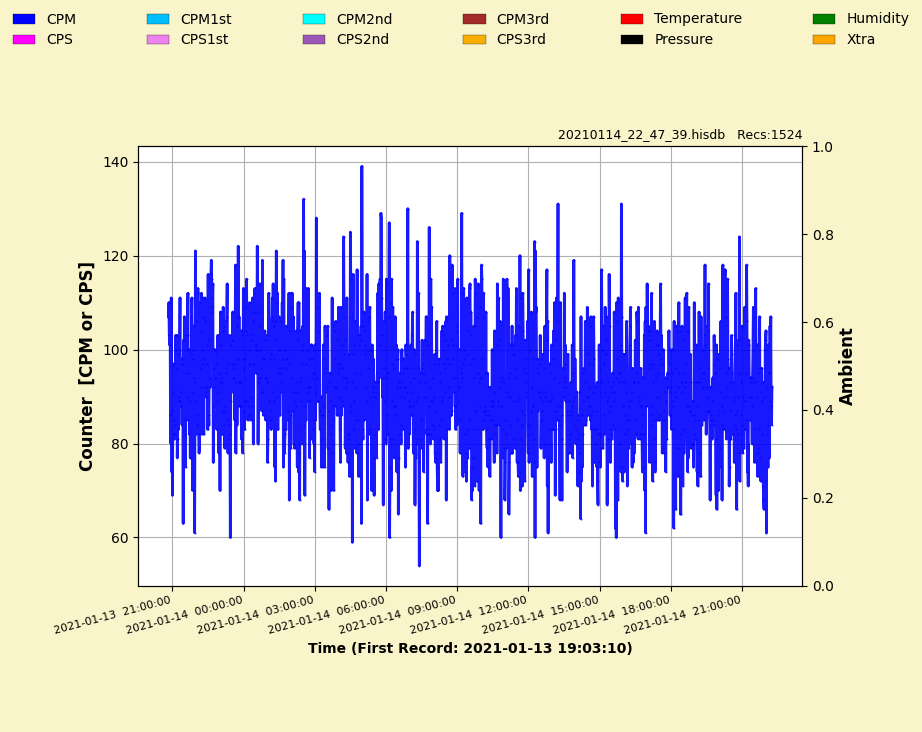

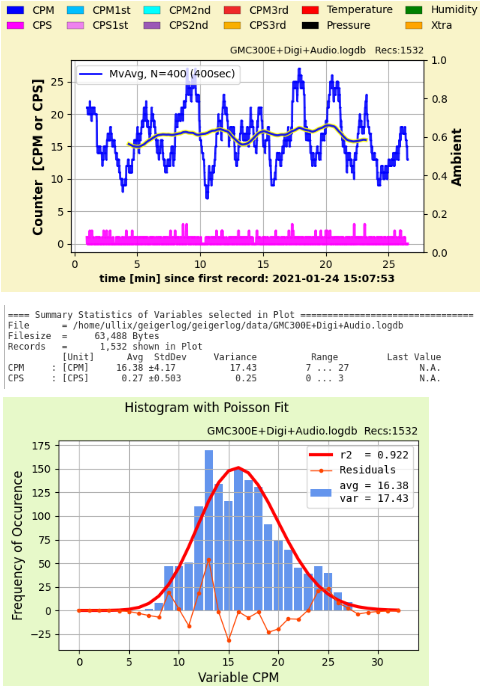

For this post I did a quick run with my GMC-300E+, measuring background in my home at a place near my desk. I stopped at about 1500 records to roughly match your data. Result here:

First look at the time course, Blue is CPM, Magenta is CPS. Your eye will get some training over time, but it is hard to make out much of this scatter. Click on Moving Average and set a time to smooth out the curve - here I used 400 sec - and you get this wavy yellow-framed-blue-line in the middle. Again, nothing spectacular; looks like the count rate is steady at around CPM= 16, so ok.

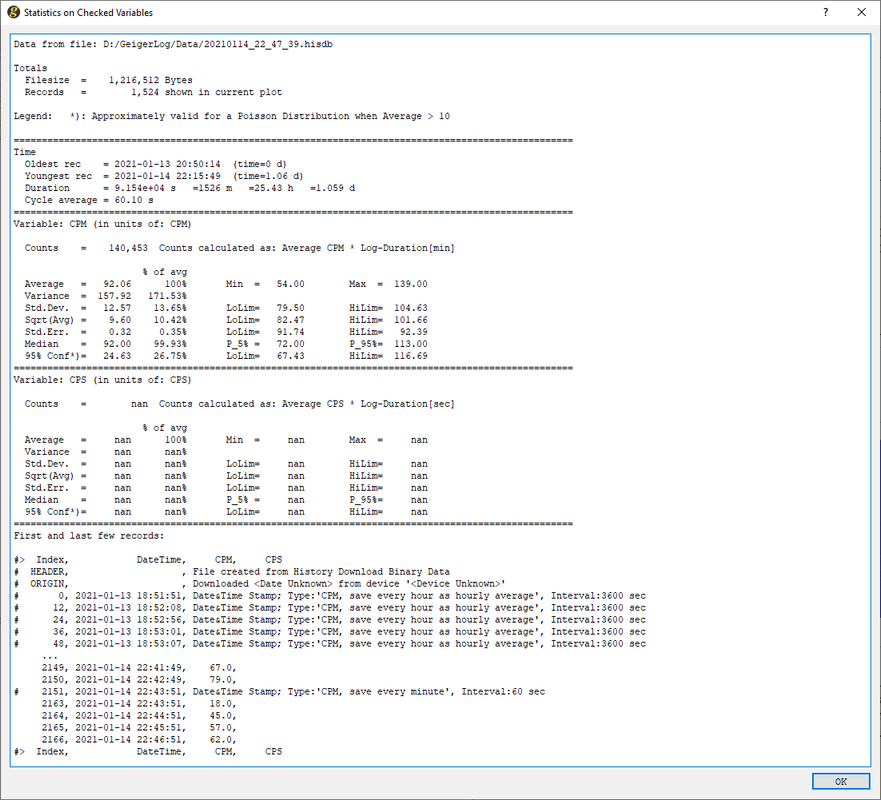

Now click the SuSt (Summary Statistics) button, and you get a few statistical data in the notepad. Look at CPM: Average and Variance: for a perfect result these two should be the same, and at 16.38 and 17.43 they look ok, given the low record number. Glance at the range, and 7 to 27 is within expectations. So, ok.

Check the Poisson histogram: r-squared of 0.922 is ok, Avg and Var we checked already. The histogram itself is not perfect (yet), but the thin red line at the bottom giving the residuals seems to be wiggling at random. Again, so far ok.

In summary: no hint of anything problematic. To be sure, we would need longer collection times.
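As a rough illustration of what such a Poisson check does, here is a minimal sketch. It is not GeigerLog's actual code; the function names, the simulated data and the exact r-squared definition are assumptions made for this example. It compares the histogram of the recorded counts with a Poisson curve of the same mean and returns the residuals:

```python
import math
import random
from collections import Counter


def poisson_pmf(k, mean):
    """Probability of exactly k counts for a Poisson distribution with this mean."""
    return math.exp(k * math.log(mean) - mean - math.lgamma(k + 1))


def poisson_fit_quality(counts):
    """Return (r_squared, residuals) of the count histogram vs. a Poisson curve."""
    n = len(counts)
    mean = sum(counts) / n
    histogram = Counter(counts)
    ks = range(min(counts), max(counts) + 1)
    observed = [histogram.get(k, 0) for k in ks]
    expected = [n * poisson_pmf(k, mean) for k in ks]
    residuals = [o - e for o, e in zip(observed, expected)]
    observed_mean = sum(observed) / len(observed)
    ss_res = sum(r * r for r in residuals)
    ss_tot = sum((o - observed_mean) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot, residuals


def sample_poisson(rng, mean):
    """Draw one Poisson variate (Knuth's method, adequate for small means)."""
    limit = math.exp(-mean)
    k, product = 0, rng.random()
    while product > limit:
        k += 1
        product *= rng.random()
    return k


# Simulated background of about 16 CPM, 1500 one-minute records (assumed values).
rng = random.Random(1)
counts = [sample_poisson(rng, 16.4) for _ in range(1500)]
r_squared, residuals = poisson_fit_quality(counts)
print(f"mean={sum(counts) / len(counts):.2f}  r_squared={r_squared:.3f}")
```

With genuinely Poissonian counts the residuals should scatter around zero without a pattern. A systematic deficit in the middle with an excess in both flanks would point to a variance larger than the mean.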

You noticed, I did NOT use the 'Stats' button to get the big statistics. I only look there if the outcome from the above creates some suspicion, and I want to dig deeper.

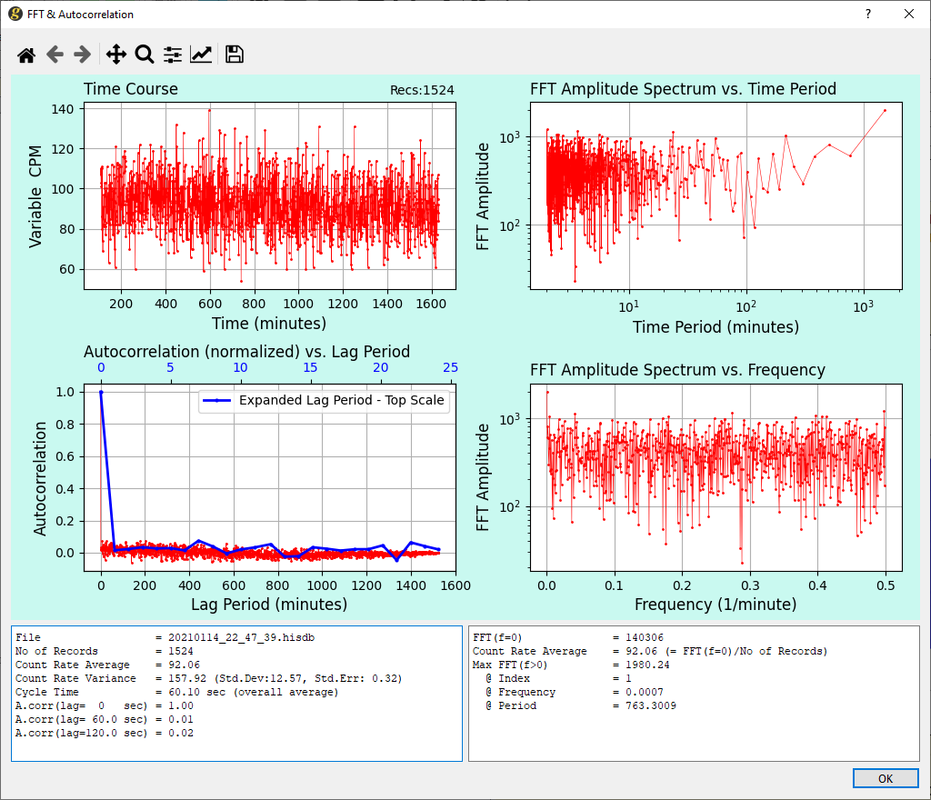

Also, I did not look at FFT. FFT will generally not be helpful when you have straight counter data, unless you see - or believe to see - a timing (caused by regular alien fly-bys ;-)), or want to check on the auto-correlation of data (see section FFT in the GeigerLog manual).

With that, let's look at your data.

Note first that my background is 16 ... 17 CPM. Since my tube is rated at 154 CPM/(uSv/h), and yours at 348, your tube would, at my location, record 348/154 = 2.26 fold higher background measured in CPM, i.e. CPM=36...38.
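The scaling above is plain arithmetic; a small sketch (the function and constant names are made up for this example, the two sensitivities are the ratings quoted above):

```python
def scale_cpm(cpm, from_sensitivity, to_sensitivity):
    """Convert a count rate between tubes using their CPM/(uSv/h) ratings."""
    return cpm * to_sensitivity / from_sensitivity


GMC_300E_PLUS_CPM_PER_USVH = 154  # rating quoted above for the GMC-300E+ tube
GMC_600_PLUS_CPM_PER_USVH = 348   # rating quoted above for the GMC-600+ tube

for cpm in (16, 17):
    scaled = scale_cpm(cpm, GMC_300E_PLUS_CPM_PER_USVH, GMC_600_PLUS_CPM_PER_USVH)
    print(f"{cpm} CPM on the 300E+ corresponds to about {scaled:.0f} CPM on the 600+")
```

This only holds if both ratings refer to the same kind of radiation field, which is an assumption here.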

One should always find the place with the lowest count rate in your living quarters first, and take this as background reference.

Seems that your "home at first floor" is this place, with CPM=57.31. This is about 50% higher than I would have expected, but the natural background at your location may be different, we just don't know.

Time course looks ok (Moving average not shown, but would probably not have given anything new). Poisson with r-squared of 0.945 also looks ok, but there is a surprisingly big difference between avg 57 and var 76! The (thin-red) residual line also does not seem to wiggle at random but to indicate lack of counts in the middle, and too many counts in both left and right flanks!

It could be in need of more counts for better statistics, but this is an effect which would appear with FET not being switched off, I'd put a red flag here!
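To put a number on "surprisingly big", one common rule of thumb is the index of dispersion (variance divided by average). For Poisson data with n independent records it should be 1, with a spread of about sqrt(2/(n-1)). The sketch below is only an illustration: the function name is made up, and the record count of 1500 is an assumption taken from the "about 1500 records" mentioned above.

```python
import math


def dispersion_check(mean, variance, n_records):
    """Return (variance/mean, z-score of its deviation from 1) for Poisson data."""
    index = variance / mean
    sigma = math.sqrt(2.0 / (n_records - 1))  # large-sample spread of the index
    return index, (index - 1.0) / sigma


N_RECORDS = 1500  # assumption: roughly the record count mentioned above

for label, mean, variance in (
    ("GMC-300E+, desk", 16.38, 17.43),
    ("GMC-600+, home", 57.31, 76.0),
):
    index, z = dispersion_check(mean, variance, N_RECORDS)
    print(f"{label}: var/avg = {index:.2f}, z = {z:.1f}")
```

A z-score within a few units of zero is compatible with pure statistics; a much larger one is hard to explain by a low record number alone.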

Let's shorten the "Garage at floor -1" discussion: roughly twice the count rate, and Poisson is showing the same problem of too little in the middle and too much in the flanks!

Again, with enough Goodwill it could be a statistical effect, but I am suspicious given the history of this counter!

My suggestion: repeat this, but let the recording run until you have at least 10000 records, and make absolutely sure not to touch the counter during collection! To speed this up, set the cycle time to 1 sec (this won't do any harm to Poisson, but you'll see an autocorrelation. If not clear, I'll explain).

|

|

|

| Reply #2

ihab17

Italy

240 Posts |

Posted - 01/24/2021 : 13:03:03

|

Many thanks Ullix. Excuse my ignorance but I am learning and hopefully get to understand all the necessary parameters and switches. Here is my data downloaded from the device in hisdb format

https://drive.google.com/drive/folders/1Y1lRICXzfA6RpOBxZUG5Ge9XoqjECp55?usp=sharing

I have noticed something that isn't really a good thing! Yesterday I set the device time to exactly match my computer time, and today the clock on the device was off by more than 12 seconds! I don't know if there is a Real Time Clock on the device, but I assume there is. Since I can set the time on the device, and the device does have a Wi-Fi connection, why doesn't GQ add NTP support in the next firmware, so that the time is synchronized with an NTP server every hour or so?

Apart from this request, and regarding the data above: I know, I shouldn't have changed more than one thing at a time. I am still experimenting. You can see from the above data that the first counts were done at home for more than 12 hours with the Tube Voltage set to 500 and the HV Factor left at the default of 164, FET 60 and Save Every Minute. Then I moved the counter to the garage for some time and got the counts with the same settings. Then I decided I won't move my counter anywhere until I get familiar with it, with all the settings, and with the impact each setting has on the accuracy of the readings and the performance of the counter. So the third set of readings (see image attached along with the data) was done at home with the tube voltage set to 483, HV Factor to 154, and Data Save every second. I will leave the counter like this for three days to get more accurate results. Then I will repeat the readings from the same place, under the same conditions, varying only one setting at a time and collecting counts for 3 days each, and continue to repeat the cycle until I get more familiar with the best settings and under what conditions I should change them.

By the way, in another discussion, you mentioned buying an audio USB cable for the counter. I looked from ebay and it was 13 USD from GQ but shipping is 103 USD!!! No way! Amazon.it doesn't have anything similar. Any recommendations where to buy it from?

Thanks |

|

|

| Reply #3

ihab17

Italy

240 Posts |

Posted - 01/25/2021 : 00:27:22

|

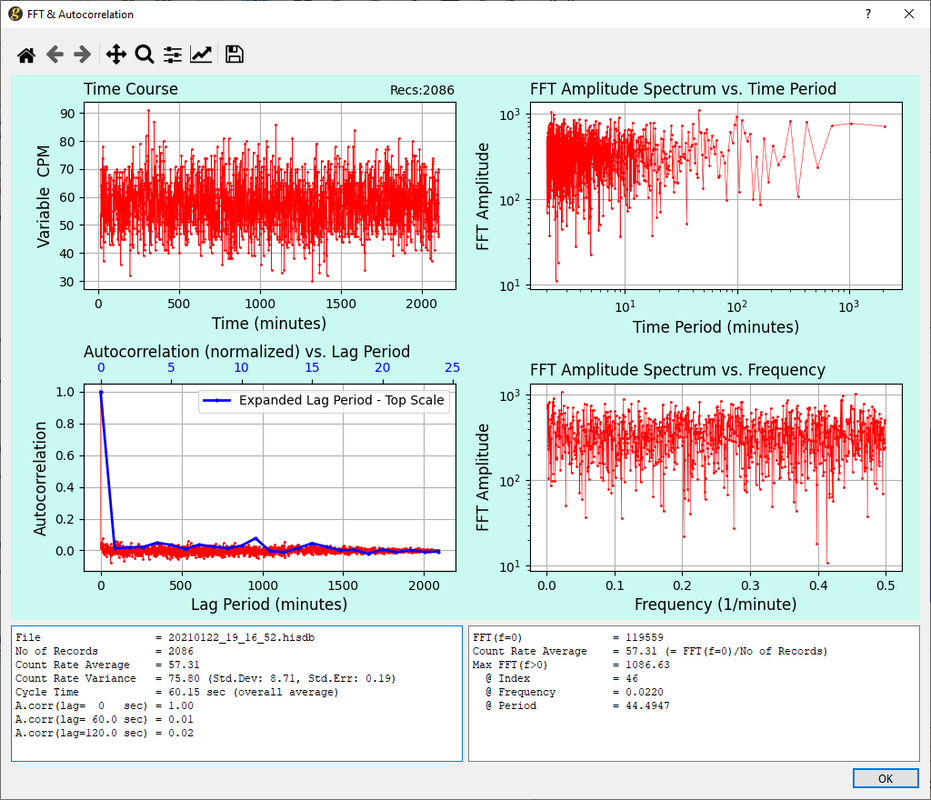

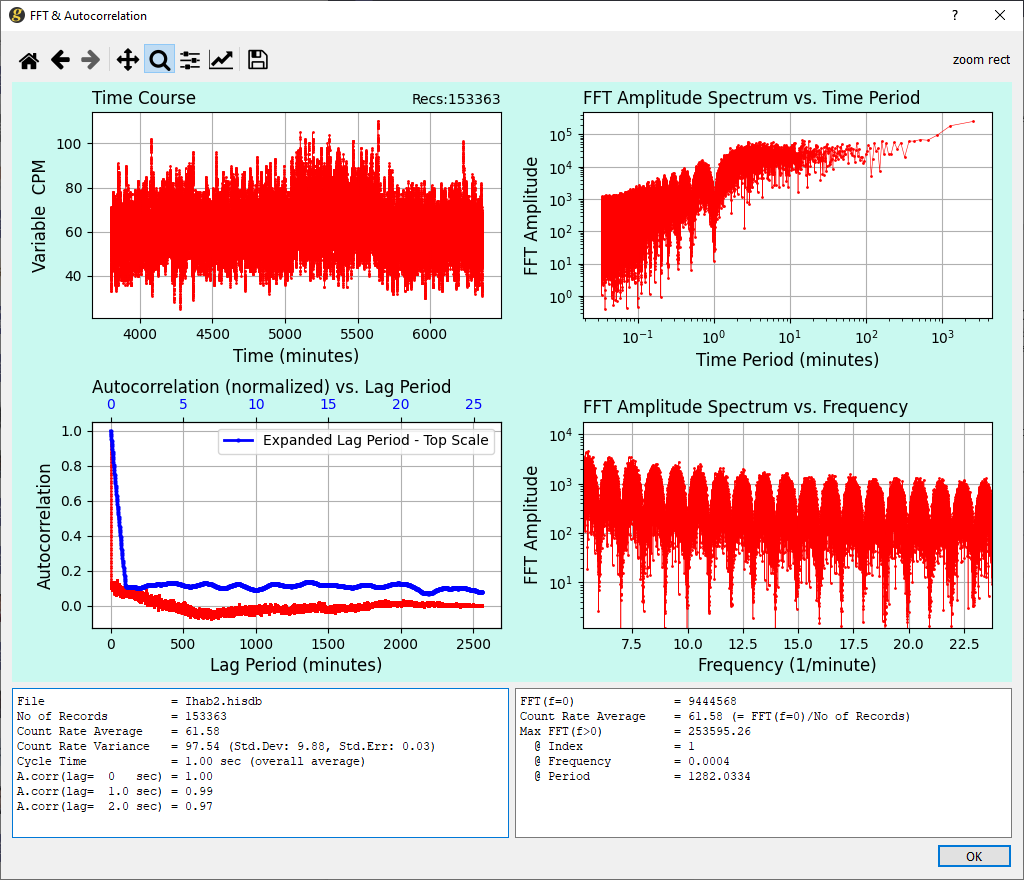

Ullix, if I zoom on the FFT Amplitude Spectrum vs. Frequency, I get something similar to this. Is this normal? What are those periodic ups and downs?

|

|

|

| Reply #4

ullix

Germany

1242 Posts |

Posted - 01/25/2021 : 01:03:11

|

What do you mean with "an audio USB cable for the counter."? If your computer does have a sound card, you only need a "standard 3.5mm Male-to-Male Stereo Audio Cable". See: https://sourceforge.net/projects/geigerlog/files/Articles/GeigerLog-AudioCounter-Support-v2.0.pdf/download

If it does not - are there really any computers out there without a sound card, except for the Raspi? - then you need a sound card, either a regular one to plug into a slot of your computer, or a USB sound card, which goes into any USB port.

Of the latter type there are plenty of offers on Amazon for under 10€. Look into the GeigerLog manual in the "Raspi Installation" section. I used this one:

Worked on both the Raspi and the desktop.

|

|

|

| Reply #5

ullix

Germany

1242 Posts |

Posted - 01/25/2021 : 01:17:08

|

The periodic pattern in the FFT analysis is the consequence of oversampling, like collecting CPM data every 1 second. In mathematical terms it is the convolution of a signal with a rectangle in the time domain, which becomes this effect in the frequency domain. You also see the autocorrelation within the data up to exactly 60 seconds.

It does not impact the validity of count data and its Poisson properties, as Poisson distributions are additive.
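The oversampling effect can be reproduced with simulated data. The following is a sketch under stated assumptions, not GeigerLog code: independent Poisson counts per second with a made-up mean of 1 CPS, and a CPM value formed every second as the sum of the last 60 CPS values. All function names are invented for this example.

```python
import math
import random


def sample_poisson(rng, mean):
    """Draw one Poisson variate (Knuth's method, adequate for small means)."""
    limit = math.exp(-mean)
    k, product = 0, rng.random()
    while product > limit:
        k += 1
        product *= rng.random()
    return k


def rolling_sum(values, window):
    """Sum of the last `window` values; one output per input once the window is full."""
    out, total = [], 0
    for i, value in enumerate(values):
        total += value
        if i >= window:
            total -= values[i - window]
        if i >= window - 1:
            out.append(total)
    return out


def autocorrelation(series, lag):
    """Sample autocorrelation of a series at the given lag."""
    n = len(series)
    mean = sum(series) / n
    total = sum((x - mean) ** 2 for x in series)
    shifted = sum((series[i] - mean) * (series[i + lag] - mean) for i in range(n - lag))
    return shifted / total


rng = random.Random(42)
cps = [sample_poisson(rng, 1.0) for _ in range(50_000)]  # about 60 CPM background
cpm = rolling_sum(cps, 60)                               # one CPM value per second

mean = sum(cpm) / len(cpm)
variance = sum((x - mean) ** 2 for x in cpm) / (len(cpm) - 1)
print(f"CPM avg={mean:.1f} var={variance:.1f}")
for lag in (15, 30, 45, 60, 90):
    print(f"lag {lag:>2} s: autocorrelation {autocorrelation(cpm, lag):+.2f}")
```

In such a simulation the autocorrelation should fall roughly linearly from 1 at lag 0 to about 0 at a lag of 60 s and stay near 0 beyond that, while average and variance of the CPM values remain close to each other. The 60 s rectangle is also what produces the regular dips in the spectrum, at multiples of 1/60 Hz.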

It is explained in detail in - you guessed it - the GeigerLog manual.

One request I have: when posting here, please make the pictures smaller. That makes reading a lot easier!

|

|

|

| Reply #6

ihab17

Italy

240 Posts |

Posted - 01/25/2021 : 01:42:01

|

| OOOOOOK, printing the manual and reading it thoroughly. Time to get serious study here |

|

|

| Reply #7

ullix

Germany

1242 Posts |

Posted - 01/25/2021 : 02:53:24

|

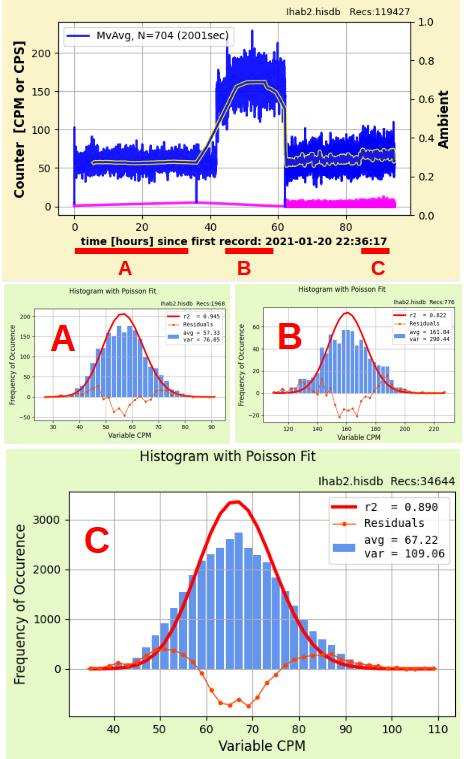

I looked into your data linked to in Reply#2.

My conclusion: something is wrong with this counter!

It looks like FET (Fast Estimate Time) is still active, although you said you had it set to 60 seconds, which would have meant it is off? Please verify: is it really at 60 sec?

Further, inspecting the file with the GeigerLog tools, I see it is a history downloaded by GeigerLog from the counter's memory. So, all recording had been done by the counter itself; GeigerLog did not touch the data during recording.

The recording was initially done in the 'CPM, save every minute' mode, and on 2021-01-23 12:39:07 changed to 'CPS, save every second'. Looks like the first part is what you presented yesterday, and the frequent saving part is new.

I see 3 main periods. Within each the count rate was not stable throughout (concluded after checking with Moving Average), so I took only the segments marked with a red bar at the bottom of the picture, labeled A, B, C, and did a Poisson on each.

What I suspected yesterday based on A and B has been clearly confirmed by C. In particular look at the thin-red residual curve at the bottom of each graph: these are not Poissonian data - there is too little in the middle, and too much in both the left and right flanks! This is reflected in var being almost twice as much as avg.

This is clearly not right.

If I had to make a guess, I'd say there is an internal FET=30 seconds active. Don't know if true, but this would result in similar distortions!

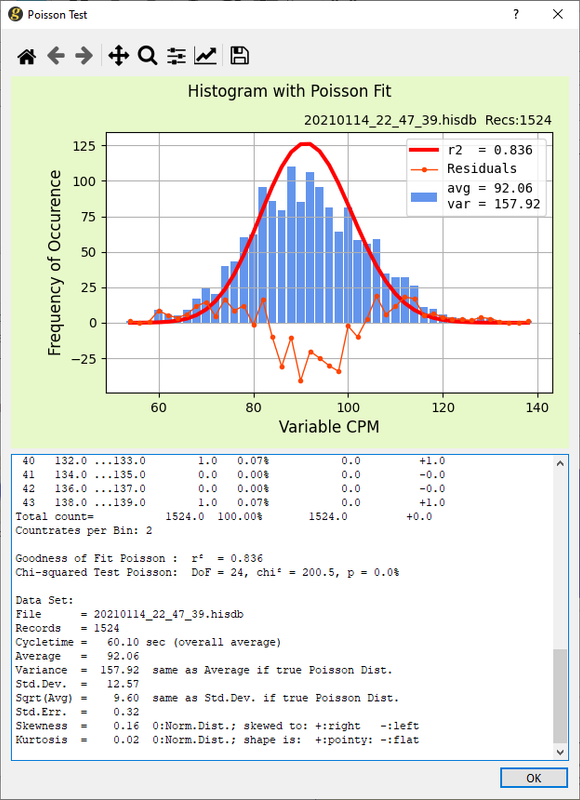

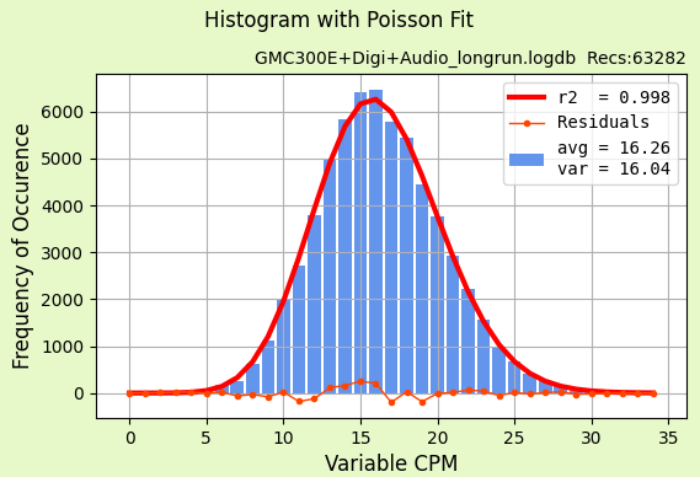

In case you wonder whether counter data can ever perform Poissonian-like, here an example using a good, old GMC-300E+ counter in an overnight run:

This has textbook-quality in all aspects!

|

|

|

| Reply #8

ihab17

Italy

240 Posts |

Posted - 01/25/2021 : 06:32:11

|

quote:

Originally posted by ullix

I looked into your data linked to in Reply#2.

FET is 60 seconds and has always been after each factory reset that I have done. It is the first parameter I change

Also YES you are right, the second part is also CPS and the data has been imported directly from the counter without GeigerLog even touching the data

|

|

|

| Reply #9

Damien68

France

780 Posts |

Posted - 01/25/2021 : 07:32:31

|

It is clear that the A, B and C histograms are really far from a Poisson distribution.

At the same time, this is what the 'histogram' and the 'Poisson reference' are for; in this case they do not certify the measurements and instead indicate a problem (it's also textbook-quality).

It remains to find the problem.

Comparing the histogram 'Recs Nb' with the duration of the red-underlined phases, parts A and B are apparently CPM-based and part C is CPS-based.

So for phase C the problem can't be related to FET, because it is CPS-based and so FET is not applicable. If it is raw CPS data from the device's flash memory, this shows a problem coming from the counter (software/hardware stability...).

I have never seen deviations this bad over a 9.6 hour test: var=109 / avg=67, and cases A and B show the same issue.

After that, we should make sure that the counter and its environment were never moved, and redo the tests to see if it repeats.

Also, if we are talking about radon (perhaps for case B), there may be variations in flow over time, which could increase the variance. But this is not the case for C, with only normal background.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/25/2021 08:27:43 |

|

|

| Reply #10

ullix

Germany

1242 Posts |

Posted - 01/25/2021 : 08:44:11

|

It occurred to me that when setting the counter to 'CPS, save every second' then only CPS data are saved into the internal memory of the counter. Those aren't impacted by the FET setting, as we discussed it. (The possibility of something FET like for CPS seems to be too far fetched, I exclude that). The CPM data are added by GeigerLog after parsing the CPS data, and this is simply the sum of the last 60 CPS values.

So, FET is not the explanation, what then can it be?

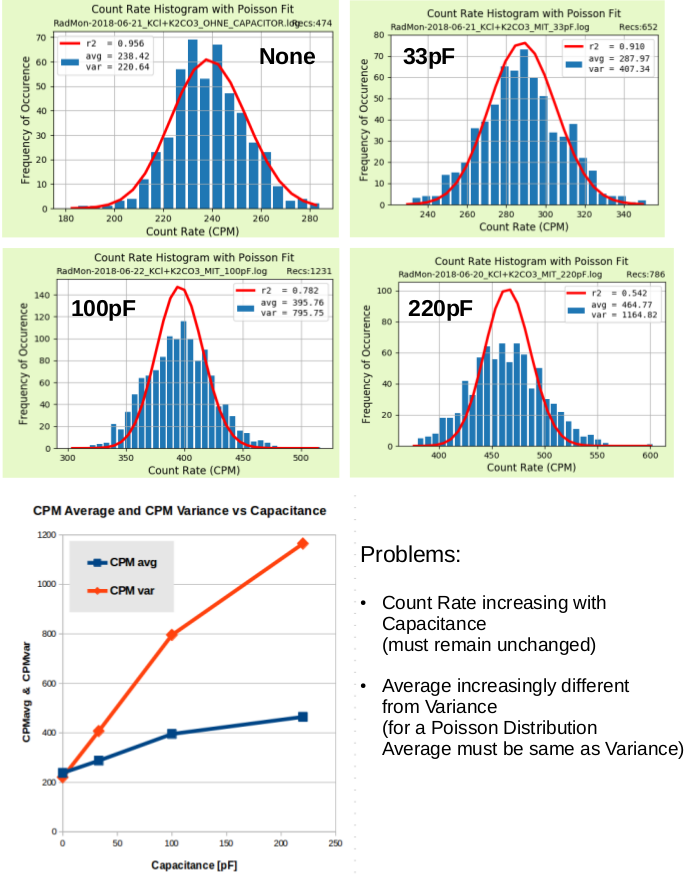

The data reminded me of some results obtained more than 2 years back when I investigated how external leads to a tube need to be designed. It turned out that the capacitance of the combined leads-anode-resistor-tube system is a very essential component.

Back then I chose to torture my 'RadMon+' Geiger counter, as this seemed to be the more robust system as opposed to a GMC-300E+ ;-). The tube was an M4011. To summarize with a final demo, I mimicked long leads by adding either none, or capacitors of 33, 100, 220pF from cathode to anode:

Clearly, with increasing capacitance Poisson becomes more and more of the shape seen here with the GMC-600+ counter, namely too little in the middle, too much in the flanks! Bad.

While Average is (approx) equal to Variance in the no-capacitance case (as it should be), it increases to 2.5 fold in the highest case! Very bad.

And the bottom graph, blue curve, shows that on top of that even the average count increases almost 2 fold over the tested range. Ugly. This definitely should not happen; the radioactive load does not increase when the counter has a fault!

Is that possibly the reason why the GMC-600+ shows a 50% higher background in Italy than expected, and not any truly higher radioactivity in the vicinity of Rome?

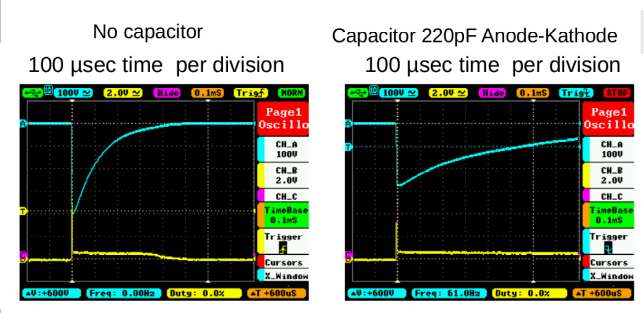

But wait, there is more. Unsurprisingly, an extra capacitance at the anode has an impact on the electrical pulse width of a count. Also data from old times:

Easy to see: without extra capacity the Pulse-Width-at-Half-Height is about 100us. While with 220pF it is about 400us, 4 times as much.

Is that perhaps the reason that a tube specified by the manufacturer at 40us dead time is configured by GQ with 330us, more than 8 times higher?

I wish someone with good electrical hands and eyes could have a look at the wiring of this device. It looks like there is a whole lot of evidence pointing to a major design flaw in the GMC-600+!

|

|

|

| Reply #11

ihab17

Italy

240 Posts |

Posted - 01/25/2021 : 09:20:02

|

Frankly speaking, I'm getting more frustrated with this device and I think I have made the wrong choice, as it seems no settings on the device would result in more accurate readings. I am still testing the device with several settings hoping I could get some good data, but reading these results, it looks like a HW issue rather than a settings issue. I also lost the possibility to send the device back to the manufacturer via eBay. If all my attempts go bananas, then I'm off for another GMC reader.

P.S The device is in a fixed place on the table in my living room and all readings are done from there. No moving, no shaking, no other radioactive sources close (I don't have any artificial radioactive source, only the background and ambient radiation) |

Edited by - ihab17 on 01/25/2021 09:22:04 |

|

|

| Reply #12

Damien68

France

780 Posts |

Posted - 01/25/2021 : 11:49:51

|

Electrically speaking:

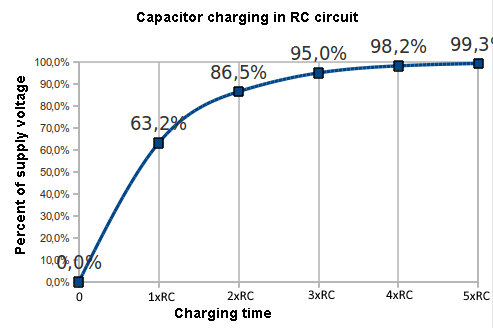

An avalanche effect causes an electric arc inside the tube, which results in a short circuit between the anode and the cathode, causing the tube to discharge.

So afterwards the tube must be recharged.

The tube has an intrinsic capacitance between its anode and its cathode, specified in the datasheets (when they exist). For the SBM-20 it is 4.2pF.

The tube is recharged through its anode resistor, which is typically 5 - 10 M ohms.

The tube capacitance and the anode resistance therefore form an RC cell.

After being discharged, the tube voltage will therefore rise again, following this curve:

With RC = 5M ohms * 4.2 pF = 21 us

On your oscillograms, without additional capacitance the pulse is already lengthened, because you have to take into account the input capacitance of the probe you are using, which must be added because it constitutes a load on the anode.

The input capacitance of the probes is specified on the probe datasheet.

1x probes typically have a capacitance of 40-50 pF / 1M ohms

10x probes typically have a capacitance of 10-20 pF / 10M ohms

100x probes tend to be around 5 pF / 100M ohms.

If you are using a 10x probe

RC = (4.2 pF + 20 pF) * 4.7M ohms = about 114 us, which corresponds quite well to your oscillogram.
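The two time constants can be checked with a few lines. This is a sketch that assumes an ideal single RC stage, so that the recharge follows 1 - exp(-t/RC); the function names are made up for the example.

```python
import math


def rc_time_constant_us(resistance_ohm, capacitance_pf):
    """Time constant tau = R * C, returned in microseconds (ohm * pF = 1e-6 us)."""
    return resistance_ohm * capacitance_pf * 1e-6


def recharge_fraction(t_us, tau_us):
    """Fraction of the supply voltage regained t_us after a full discharge."""
    return 1.0 - math.exp(-t_us / tau_us)


tube_only = rc_time_constant_us(5e6, 4.2)            # SBM-20 alone, 5 M ohm
with_probe = rc_time_constant_us(4.7e6, 4.2 + 20.0)  # plus an assumed 20 pF probe
print(f"tube only:  tau = {tube_only:.1f} us")
print(f"with probe: tau = {with_probe:.1f} us")
print(f"recharged after one tau: {recharge_fraction(tube_only, tube_only):.0%}")
```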

Adding a capacitance of 220pF electrically speaking will increase the pulse time, but I see no reason to increase the number of detections.

The most likely explanation for me is:

The charge released in the tube during a detection is Q = CU, and the corresponding energy is E = CU²/2 (both grow with C), where

C is the total capacitance between the anode and the cathode of the tube,

U is the voltage between the anode and the cathode.

If we increase C we therefore increase the energy discharged in the tube during each detection.

If the energy discharged is too high, it may create a hot spot in the tube and then automatically initiate a second discharge, which will cause duplicate detections (a bit like an acoustic echo). On this point I am not a specialist.

If the length of the wires is increased, the cathode wire must be protected, because it is through it that the current is measured to detect the pulses. So use a coaxial cable, connecting the cathode to the center conductor and the anode to the shield. It is also necessary to place the anode resistor on the tube side, as close as possible to the anode.

PS: for the LND7317, LND recommends measuring the current on the anode side, which is not what is done on the GMC-600+, but that will not explain the problem.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/25/2021 14:14:52 |

|

|

| Reply #13

Damien68

France

780 Posts |

Posted - 01/25/2021 : 13:23:55

|

| If we had too low anode resistance (for example to reduce dead time), it would be second order but we could have the same problems as with too high capacitance. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/25/2021 13:24:57 |

|

|

| Reply #14

EmfDev

2403 Posts |

Posted - 01/25/2021 : 13:56:48

|

| If you want to test raw counts, you must also disable dead time, and change fast estimate to 60 seconds. Also erase previous saved data. |

|

|

| Reply #15

ihab17

Italy

240 Posts |

Posted - 01/25/2021 : 23:41:40

|

quote:

Originally posted by Damien68

If we had too low anode resistance (for example to reduce dead time), it would be second order but we could have the same problems as with too high capacitance.

Ok this is fine with me, but does that mean that I have to open the device myself and introduce/remove/change those resistance(s)?

|

|

|

| Reply #16

Damien68

France

780 Posts |

Posted - 01/26/2021 : 00:51:31

|

quote:

Originally posted by ihab17

Ok this is fine with me, but does that mean that I have to open the device myself and introduce/remove/change those resistance(s)?

No, definitely not; it's just a hypothesis, a possibility. The problem may be something else. I don't have a GMC-600 and don't know what the problem is.

You can follow what EmfDev says to see what happens.

EmfDev is GQ. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/26/2021 00:55:06 |

|

|

| Reply #17

ullix

Germany

1242 Posts |

Posted - 01/26/2021 : 02:50:26

|

quote:

If you want to test raw counts, you must also disable dead time, and change fast estimate to 60 seconds. Also erase previous saved data.

Aha.

And what exactly does this mean?

You can't "disable dead time". It is a physical phenomenon, which exists. Or is there yet another algorithm gone sour that depends on some more unexplained settings and is somehow scrubbing the data?

And to be clear: the point is not "to test raw counts". The point is to get the counts to be expected from a counter, and not counts massaged by undisclosed software!

Wouldn't it be a lot more beneficial for both users and GQ if you explained up front what is going on, and what changes have been made to whatever part of the firmware, rather than giving out some fragment of information only when users find out that something is wrong?

This GMC-600+ counter is defective. Period. Whatever is causing it still remains to be seen.

|

|

|

| Reply #18

Damien68

France

780 Posts |

Posted - 01/26/2021 : 03:07:23

|

At 65 CPM, having dead time activated or not shouldn't make any difference (on average about 1 part in 10,000), and the same goes for CPS values of at most 5 or 6....

what is certain is that scientists always certify their measurements using histograms and Poisson curves (it's within the rules of science that we learn at university).

for the moment we can't do it without knowing why, and we should be able to do it without the slightest difficulty. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/26/2021 04:47:51 |

|

|

| Reply #19

EmfDev

2403 Posts |

Posted - 01/26/2021 : 11:24:48

|

| There is an option to disable dead time, and it will just give you raw counts. The dead time formula was discussed in another thread before. The CPM is straightforward data from the Geiger counter. There is no other data manipulation technique used in CPM except Dead Time and Fast Estimate. With a 60 sec Fast Estimate, there is no calculation other than Dead Time, if dead time is enabled. In low CPM, dead time does not affect the CPM. |

|

|

| Reply #20

ihab17

Italy

240 Posts |

Posted - 01/26/2021 : 13:21:57

|

quote:

Originally posted by EmfDev

In low CPM, dead time does not affect the CPM.

Hi. How low is a low CPM count? |

|

|

| Reply #21

EmfDev

2403 Posts |

Posted - 01/26/2021 : 15:22:38

|

| Low CPM is less than 100. |

|

|

| Reply #22

ullix

Germany

1242 Posts |

Posted - 01/26/2021 : 23:47:24

|

| @Ihab: so, switch dead time off and repeat your measurements. |

|

|

| Reply #23

ihab17

Italy

240 Posts |

Posted - 01/27/2021 : 00:51:43

|

quote:

Originally posted by ullix

@Ihab: so, switch dead time off and repeat your measurements.

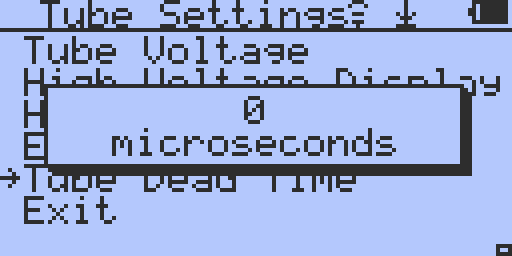

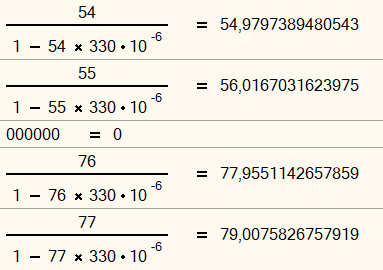

Holy cow! With dead time completely switched off (I assume setting Tube Dead Time to 0) like this

I get 0 counts even in the garage! Maybe because the radiation levels in my garage are high I get these results, I should try this from home where I have an average CPM of 70. I'll try this

|

Edited by - ihab17 on 01/27/2021 01:01:39 |

|

|

| Reply #24

Damien68

France

780 Posts |

Posted - 01/27/2021 : 01:11:31

|

this is another bug, but:

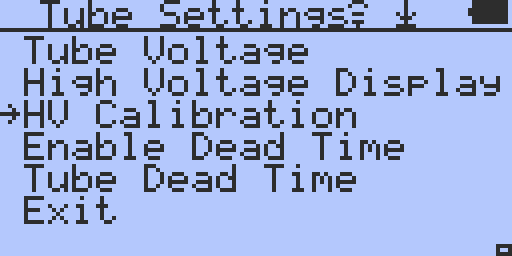

In the tube settings menu, just before the "Tube Dead Time" option, you have the option "Enable Dead Time"; use this one to disable it.

The option name should be "Enable/Disable Dead Time" or better: "Dead Time Compensation On/Off", because Ullix is right, there is no risk of changing the laws of physics by pressing a key. This is what we call a confusion. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 01:57:06 |

|

|

| Reply #25

ihab17

Italy

240 Posts |

Posted - 01/27/2021 : 01:25:07

|

quote:

Originally posted by Damien68

this is another bug

Ha ha ha ha ha, OK, got it |

|

|

| Reply #26

ullix

Germany

1242 Posts |

Posted - 01/27/2021 : 04:23:40

|

I remember that we had the dead time discussion some time ago. Eventually I gave clear instruction on how to determine this correction factor, and GeigerLog has it built in since many versions ago.

Though I had advised against using it, since there are other issues to solve than correcting the count rate when it is dangerously high. And this topic alone gives plenty of examples for things that need more urgent actions.

Nevertheless, this is the formula for dead time correction of count rate. You find it in the manual, and you find it in GeigerLog's configuration file geigerlog.cfg:

quote:

# CPM correction for deadtime:

# is defined by formula: CPM_true = CPM_obs / (1 - cpm_obs * deadtime)

Notice something? What happens, when you enter a dead time of 0 (zero)? The formula becomes

quote:

CPM_true = CPM_obs

Really not surprising, because when you have no deadtime, i.e. when it is zero, then there is never ever anything to correct.

And what was the outcome when Ihab entered a dead time of zero?

Oh, dear!

GQ: can we please see the formula as programmed into the firmware? In computer code, please. It may have been entered in the inverse ...
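For reference, the formula quoted above written out in code. This is a sketch of the textbook correction only; the function names are made up here, and nothing is implied about what the firmware actually does. A dead time of zero leaves the count rate unchanged, and at background levels the correction is tiny:

```python
def correct_cps(cps_obs, deadtime_s):
    """Textbook correction: CPS_true = CPS_obs / (1 - CPS_obs * deadtime)."""
    return cps_obs / (1.0 - cps_obs * deadtime_s)


def correct_cpm(cpm_obs, deadtime_us):
    """Same correction for CPM, with the dead time given in microseconds."""
    return 60.0 * correct_cps(cpm_obs / 60.0, deadtime_us * 1e-6)


for deadtime_us in (0, 50, 120, 330):
    corrected = correct_cpm(65.0, deadtime_us)
    print(f"deadtime {deadtime_us:>3} us: 65 CPM -> {corrected:.4f} CPM")
```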

|

|

|

| Reply #27

Damien68

France

780 Posts |

Posted - 01/27/2021 : 06:04:45

|

Multiplying or dividing by 1 is the same thing; I wonder how they managed to produce this kind of bug.

Could it be a slightly misplaced "sanity check" that "tries" to avoid a division by 0? Or an arbitrary one?

EDIT: on my old 500+, if I set deadtime to 0 it continues to work.

PS: a "sanity check" in C is a test done on variables before instructions, to avoid falling into inappropriate situations (leading, for example, to a division by 0) and to avoid generating errors or aberrations.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 07:07:49 |

|

|

| Reply #28

Damien68

France

780 Posts |

Posted - 01/27/2021 : 06:18:41

|

OK, I made a mistake.

I can observe the issue with my old GMC-500+. It is necessary to first enable dead time if it is not already enabled, and only then set the dead time to 0.

At that moment the LED flashes on detections, but the CPM on the screen decreases slowly to 0 CPM and stays at 0 CPM.

(When dead time is enabled from the disabled state and its value is set to 0, the counter automatically resets it to its default value of 120 us.) |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 06:48:49 |

|

|

| Reply #29

ullix

Germany

1242 Posts |

Posted - 01/27/2021 : 08:05:32

|

@Damien: interesting observation. My old 500+ (fw 1.22) does not seem to have this feature? In what part of the menu do you find it?

|

|

|

| Reply #30

Damien68

France

780 Posts |

Posted - 01/27/2021 : 08:11:00

|

I have v2.18

Main Menu/Init SetUp/Tube Settings

"Enable Dead Time" and

"Tube1 Dead Time"

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 08:12:30 |

|

|

| Reply #31

Damien68

France

780 Posts |

Posted - 01/27/2021 : 08:13:30

|

@Ullix,

you have 8K monitor ? |

Mastery is acquired by studying, with it everything becomes simple |

|

|

| Reply #32

ullix

Germany

1242 Posts |

Posted - 01/27/2021 : 08:14:28

|

Thanks.

Not existing. So, this now qualifies as a goof counter ;-)

Edit: sorry, a typo. But inadvertently correct: a good counter, as opposed a goofed one ... |

Edited by - ullix on 01/27/2021 08:15:44 |

|

|

| Reply #33

Damien68

France

780 Posts |

Posted - 01/27/2021 : 08:20:06

|

the CPM decreases slowly, it suggests that the compensation of the dead time must be made at the level of the CPS. in any case the deadtime = 0 alters the CPS which then alters the CPM

but with FET it's better to keep some reserves. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 08:26:45 |

|

|

| Reply #34

Damien68

France

780 Posts |

Posted - 01/27/2021 : 09:00:34

|

It gives me beautiful histograms, very Poissonian.

I just checked it out with CPM variance very close to mean value using CPS that I got on device Flash.

also my counter must still be old enough. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 09:41:13 |

|

|

| Reply #35

Damien68

France

780 Posts |

Posted - 01/27/2021 : 10:50:31

|

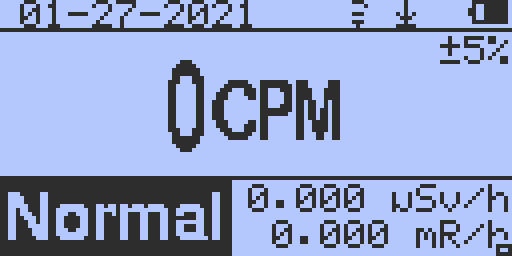

this is what I expected, the CPS recorded on the Flash falls to 0 with the condition: deadtime activated and = 0 uS

So dead time compensation is done at the CPS level and certainly taken into account in the CPS recorded.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/27/2021 11:32:44 |

|

|

| Reply #36

EmfDev

2403 Posts |

Posted - 01/27/2021 : 11:32:16

|

| There is an option to enable or disable dead time, and dead time should not be 0. |

|

|

| Reply #37

WigglePig

31 Posts |

Posted - 01/28/2021 : 00:37:18

|

quote:

Originally posted by Damien68

@Ullix,

you have 8K monitor ?

I do though and I have helped Ullix a little with testing for behaviour with scaling and such displays. |

|

|

| Reply #38

Damien68

France

780 Posts |

Posted - 01/28/2021 : 00:54:13

|

quote:

Originally posted by WigglePig

quote:

Originally posted by Damien68

@Ullix,

you have 8K monitor ?

I do though and I have helped Ullix a little with testing for behaviour with scaling and such displays.

OK that must be pretty cool,

is it better than 2 monitors? |

Mastery is acquired by studying, with it everything becomes simple |

|

|

| Reply #39

ullix

Germany

1242 Posts |

Posted - 01/28/2021 : 01:37:35

|

@Damien: when you make a factory reset, what is the default setting of deadtime on your 500+ counter? We know from @Ihab it is 330us on a 600+ counter.

@EmfDev:

quote:

There is an option to enable or disable dead time, and dead time should not be 0.

Don't you see the problem? Again the formula:

quote:

CPS_true = CPS_obs / (1 - CPS_obs * deadtime)

If this formula were used, it would not matter to enter 0 for the deadtime. It would simply mean that (CPS_obs * deadtime) becomes zero, thus the denominator becomes 1, and thus the observed and corrected numbers become the same.

But since we have seen that it DOES indeed matter - because CPS_true became zero - this cannot be the formula that was implemented. It is that simple. And since you did implement a separate setting to switch off deadtime correction, you have realized that something is wrong but drawn the wrong conclusion!

You said the formula you used came from another topic here in the forum, so it cannot be proprietary information. Could you please let us know what this formula is?

The only numerical problem in this formula comes up when the denominator becomes zero. This happens when CPS_obs = 1 / deadtime. With a deadtime of 330us this is CPS=1/(3.3E-4)=3030. This is a high number, but the LND7317 should be able to handle almost 10x as much. Why this castration?
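To make the argument above concrete, here is a minimal C sketch of the quoted formula. The function names and the choice to return infinity at or beyond the singular rate are assumptions made for illustration only; this is not the firmware's code.

```c
#include <math.h>   /* INFINITY */

/* Dead-time correction as quoted in this thread:
 *   CPS_true = CPS_obs / (1 - CPS_obs * deadtime)
 * deadtime_s is in seconds. Names are illustrative, not firmware names. */
double deadtime_correct(double cps_obs, double deadtime_s)
{
    double denom = 1.0 - cps_obs * deadtime_s;
    if (denom <= 0.0) {
        /* At or beyond 1/deadtime the formula has no meaningful result. */
        return INFINITY;
    }
    return cps_obs / denom;
}

/* Observed count rate at which the denominator reaches zero.
 * Only meaningful for deadtime_s > 0. */
double singular_cps(double deadtime_s)
{
    return 1.0 / deadtime_s;
}
```

With a deadtime of 0 the denominator is exactly 1, so `deadtime_correct(100.0, 0.0)` returns the observed value unchanged, and `singular_cps(330e-6)` is about 3030, matching the number given above.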

|

|

|

| Reply #40

ullix

Germany

1242 Posts |

Posted - 01/28/2021 : 01:50:59

|

@Damien: your pic in Reply #35 is quite convincing. I would like to do some reverse-engineering of this odd deadtime correction function. But my counter doesn't do that and I need help.

What numbers go into the deadtime field: can you only select from a list, or can you freely enter numbers? Is it integer only from 0 to 65535, or can you also enter numbers with decimal point?

If you could run some tests, I am highly interested. Ideal would be to remove the tube and use a pulse generator to feed pulses to the cathode end. If not possible, use the strongest sources you have, and get decent CPMs for deadtime 330 microseconds, 3000(yes!), 30, 3, 1, 0.3, 0.03, and show CPM vs deadtime?

|

|

|

| Reply #41

Damien68

France

780 Posts |

Posted - 01/28/2021 : 02:29:03

|

@ullix, after a factory reset DT is 120uS.

We can increment or decrement this value in steps of 1 uS.

I don't have a high-energy source, but I have a Siglent SDG1025 generator. I have already plugged it into the cathode after removing the tube. I know what you need; I'll get this done.

I would test with DT = disabled, 50uS, 100uS, 200uS, 400uS

and, for example, pulse frequencies of 1, 10, 100, 1000, 10000, 20000 Hz.

Each measurement should take only a few seconds or a few minutes, depending on what happens.

I can also test the impact of wide pulses.

|

Mastery is acquired by studying, with it everything becomes simple |

|

|

| Reply #42

Damien68

France

780 Posts |

Posted - 01/28/2021 : 06:21:38

|

quote:

Originally posted by ullix

@Damien: your pic in Reply #35 is quite convincing. I would like to do some reverse-engineering of this odd deadtime correction function. But my counter doesn't do that and I need help.

What numbers go into the deadtime field: can you only select from a list, or can you freely enter numbers? Is it integer only from 0 to 65535, or can you also enter numbers with decimal point?

If you could run some tests, I am highly interested. Ideal would be to remove the tube and use a pulse generator to feed pulses to the cathode end. If not possible, use the strongest sources you have, and get decent CPMs for deadtime 330 microseconds, 3000(yes!), 30, 3, 1, 0.3, 0.03, and show CPM vs deadtime?

Sorry, I only just saw the values you asked for. Here are some results; let me know if you want others.

GMC-500+ v 2.18 in text mode

+3V / 0V pulses with 50uS pulse width (25uS pulse width for the 20 kHz test), applied between the tube cathode clip and battery ground. GM tube 1 is removed. GM tube 2 is disabled.

All values are the CPM displayed after 60s of stabilisation.

Note: each time, the CPS stored in the device flash is exactly the stabilised displayed CPM / 60.

1. Dead time disabled:

10Hz pulses : 600 CPM -> x1

100Hz: 6 000 -> x1

1kHz: 60 000 -> x1

10kHz: 600 000 -> x1

20kHz: 1 200 000 -> x1

----------------------------------------------------------------

2. Dead time = 60uS:

10Hz: 600 -> x1

100Hz: 6 003 -> x1.0005

1kHz: 63 780 -> x1.063

2kHz: 136 320 -> x1.136

3kHz: 219 480 -> x1.22

4kHz: 315 750 -> x1.32

5kHz: 428 520 -> x1.43

6kHz: 562 470 -> x1.56

10kHz: 1 499 970 -> x2.50

----------------------------------------------------------------

3. Dead time = 120uS (default) :

10Hz: 600 -> x1

100Hz: 6 060 -> x1.01

1kHz: 68 160 -> x1.14

2kHz: 157 860 -> x1.32

3kHz : 281 220 -> x1.56

4kHz : 461 520 -> x1.92

5kHz : 750 000 -> x2.5

6kHz : 1 390 530 -> x3.86

10kHz : ----- displayed value is truncated, but the CPS in flash is 52 059 121 (this is a CPS value, not CPM). Note: normally impossible with 120 uS DT -> x5206

----------------------------------------------------------------

4. Dead time = 240uS:

10Hz: 600 -> x1

100Hz: 6 120 -> x1.02

1kHz: 78 900 -> x1.32

2kHz: 230 730 -> x1.92

3kHz: 642 840 -> x3.57

4kHz: 6 000 000 -> x25

5kHz: displayed value is truncated |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 07:52:47 |

|

|

| Reply #43

Damien68

France

780 Posts |

Posted - 01/28/2021 : 07:14:14

|

| What should be done is a similar test on the counters that do not count correctly: we would see immediately whether the CPS is constant or not, and whether there is significant jitter in the execution of the "one second" timer interrupt routine. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 11:24:17 |

|

|

| Reply #44

Damien68

France

780 Posts |

Posted - 01/28/2021 : 08:20:58

|

I have some more data:

5. Dead time = 330uS:

10Hz: 600 -> x1

50Hz: 3000 -> x1

52Hz: 3120 -> x1

54Hz: 3240 -> x1

55Hz: 3360 -> x1.0182 (+60 hits/min <=> +1 hit/second)

56Hz: 3420 -> x1.0179 (+60 hits/min <=> +1 hit/second)

60Hz: 3660 -> x1.0167 (+60 hits/min <=> +1 hit/second)

70Hz: 4260 -> x1.0143 (+60 hits/min <=> +1 hit/second)

75Hz: 4560 -> x1.0133 (+60 hits/min <=> +1 hit/second)

76Hz: 4620 -> x1.0132 (+60 hits/min <=> +1 hit/second)

77Hz: 4740 -> x1.0260 (+120 hits/min <=> +2 hits/second)

80Hz: 4920 -> x1.0250 (+120 hits/min <=> +2 hits/second)

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 08:54:39 |

|

|

| Reply #45

Damien68

France

780 Posts |

Posted - 01/28/2021 : 08:37:51

|

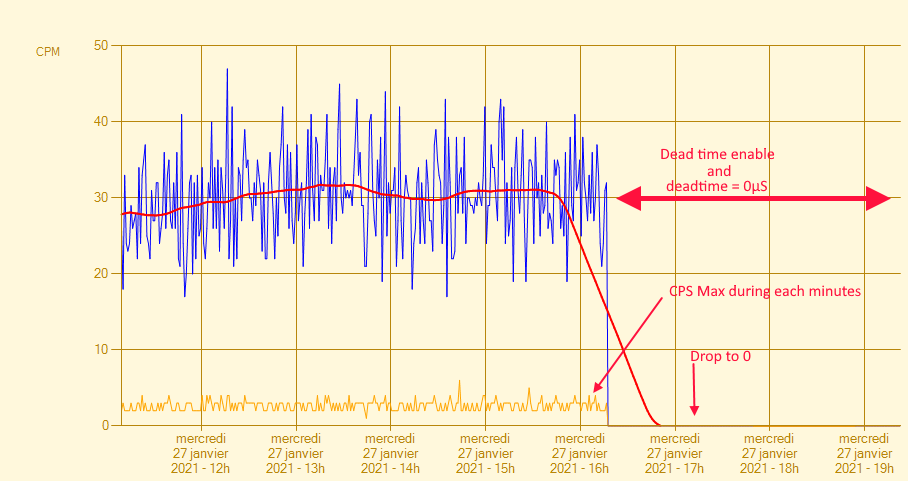

So dead time compensation at 330uS, applied at the CPS level, is equivalent for the first values to this substitution table:

1 -> 1

2 -> 2

3 -> 3

.

.

.

54 -> 54 //+0

55 -> 56 //+1

56 -> 57 //+1

.

.

.

76 -> 77 //+1

77 -> 79 //+2

78 -> 80 //+2

.

.

.

So 55 and 78 are impossible values for the dead-time-compensated CPS output, because the output jumps directly from 54 CPS to 56 CPS and from 77 CPS to 79 CPS.

This can be an issue if we want to draw a histogram at the CPS level.

EDIT: This is CPS, not CPM, so these rates are unreachable at normal background levels. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 10:06:31 |

|

|

| Reply #46

Damien68

France

780 Posts |

Posted - 01/28/2021 : 09:06:49

|

But the formula is good:

For 55 raw CPS, dead time compensation at 330uS with Ullix's equation does indeed give 56 CPS, and it gives 79 for 77 CPS, which is what we get with the GMC-500+.

quote:

CPS_true = CPS_obs / (1 - CPS_obs * deadtime)

So:

54 -> 54

55 -> 56

76 -> 77

77 -> 79

This is the same as the GMC-500+ DT compensation.
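The mapping above can be reproduced with a short integer sketch in C. Two things here are assumptions: that the result is truncated to a whole count (this is inferred from the measured values, not from firmware source), and the function name, which is made up for illustration.

```c
#include <stdint.h>

/* Integer form of CPS_true = CPS_obs / (1 - CPS_obs * deadtime),
 * with deadtime in microseconds and the result truncated to a whole count.
 * Assumption: truncation is inferred from the measured table. */
uint32_t correct_cps_truncated(uint32_t cps_obs, uint32_t deadtime_us)
{
    /* Dead microseconds accumulated per second of counting. */
    uint64_t busy_us = (uint64_t)cps_obs * deadtime_us;
    if (busy_us >= 1000000u) {
        return UINT32_MAX;  /* no valid result at or beyond 1/deadtime */
    }
    uint64_t corrected = (uint64_t)cps_obs * 1000000u / (1000000u - busy_us);
    return corrected > UINT32_MAX ? UINT32_MAX : (uint32_t)corrected;
}
```

Under these assumptions, `correct_cps_truncated(55, 330)` gives 56 and `correct_cps_truncated(77, 330)` gives 79, while 54 and 76 map to 54 and 77, so the output values 55 and 78 never occur.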

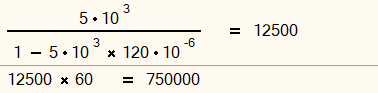

EDIT:

I did another calculation with the previous data:

@DT=120uS

f= 5kHz (CPS raw= 5000)

With the equation we find real CPS = 12500 <=> 750 000 CPM

This is exactly what the GMC-500+ returns. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 01:30:34 |

|

|

| Reply #47

Damien68

France

780 Posts |

Posted - 01/28/2021 : 09:25:48

|

But we are talking about CPS and not CPM, so this is not reachable at normal background levels.

55 CPS is equivalent to 3300 CPM.

The problem is elsewhere. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 09:54:11 |

|

|

| Reply #48

EmfDev

2403 Posts |

Posted - 01/28/2021 : 11:10:11

|

CPS_true = CPS_obs / (1 - CPS_obs * deadtime)

This formula is used, as discussed in the other thread, if deadtime is enabled. If CPS_obs is too high and the denominator becomes negative, it will give a wrong reading. So there is a check that uses deadtime as a denominator. So if the dead time is 0, it seems that this check caused the reading to become 0. Deadtime is not supposed to be 0, and there is a menu to disable it in just 2 clicks. |

|

|

| Reply #49

ihab17

Italy

240 Posts |

Posted - 01/28/2021 : 12:52:00

|

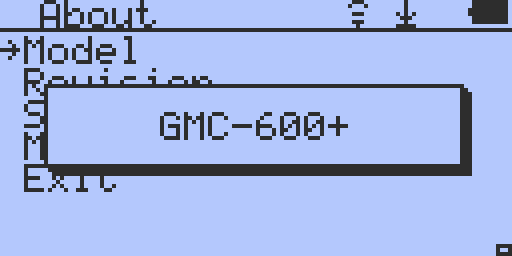

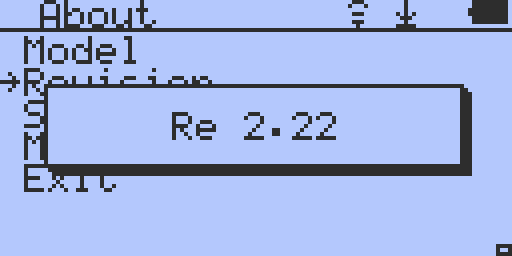

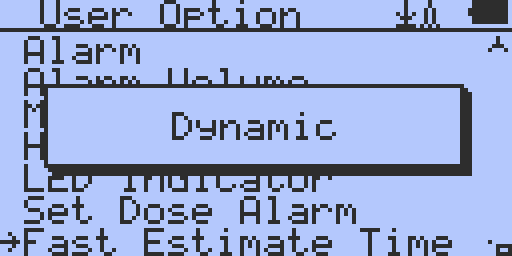

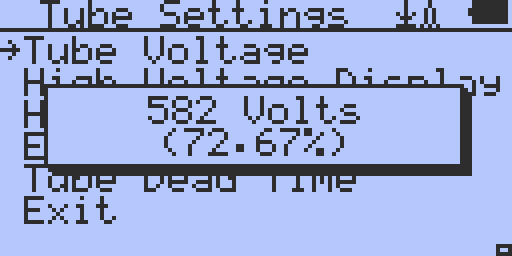

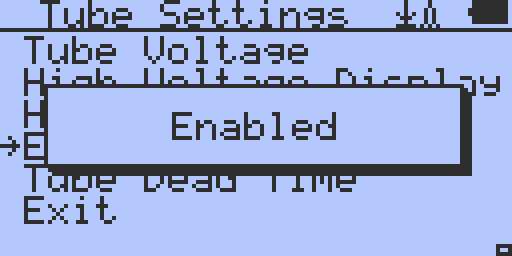

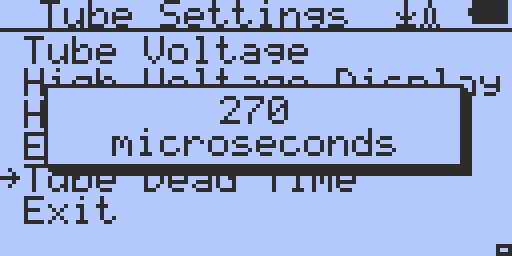

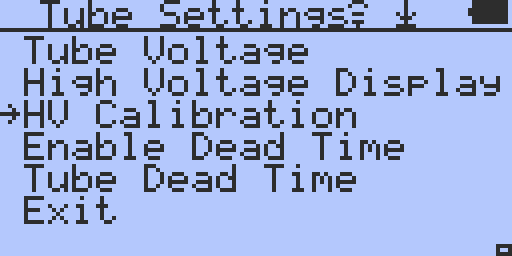

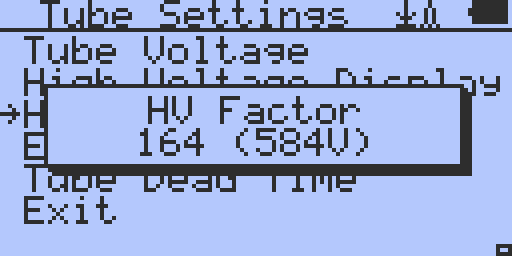

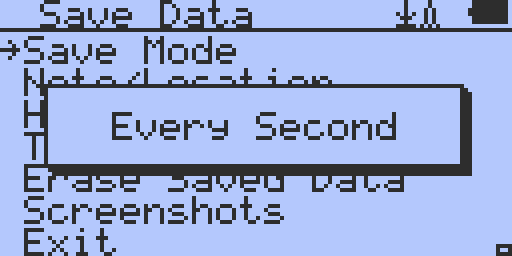

The following images show the factory defaults on the GMC-600+; I did the reset just for the sake of posting them.

Model

Firmware Version

Fast Estimate Time default setting. Values can be set to: Dynamic, 05, 10, 15, 20, 30, or 60 seconds

Tube Voltage default setting

Enable Dead Time default setting. Values can be either ENABLED or DISABLED

Tube Dead Time default setting. Values can be from 0 to 500 microseconds. The factory default is 270 microseconds

Under HV Calibration from the Tube Settings, you will get the next image on HV Factor

HV Factor default setting. This can be changed from values ranging from 000 (9V) to 255 (898V)

Data Save Mode default setting

|

Edited by - ihab17 on 01/28/2021 13:47:07 |

|

|

| Reply #50

Damien68

France

780 Posts |

Posted - 01/28/2021 : 13:28:29

|

What is HV Factor?

This parameter does not smell very good; nobody knows exactly what it is.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 13:34:34 |

|

|

| Reply #51

ihab17

Italy

240 Posts |

Posted - 01/28/2021 : 13:43:09

|

quote:

Originally posted by Damien68

What is HV Factor?

This parameter does not smell very good; nobody knows exactly what it is.

It is under HV Calibration.

Could be High Voltage Calibration Factor? This can be changed from values ranging from 000 (9V) to 255 (898V). I will add this to my above reply |

Edited by - ihab17 on 01/28/2021 13:47:24 |

|

|

| Reply #52

Damien68

France

780 Posts |

Posted - 01/28/2021 : 13:48:17

|

OK, I am also thinking of a high voltage calibration factor, but we don't know what it's for, and even less how to set it.

There is a tube voltage parameter; we can guess what that is for.

But the HV calibration parameter???? |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 13:50:06 |

|

|

| Reply #53

Damien68

France

780 Posts |

Posted - 01/28/2021 : 13:58:16

|

On second thought, might it be the calibration of the internal voltmeter that is used to read the high voltage in text mode???

That seems likely; besides, it sits just below the High Voltage display item. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 14:04:53 |

|

|

| Reply #54

EmfDev

2403 Posts |

Posted - 01/28/2021 : 14:02:10

|

| The HV Calibration is for adjusting the counter's High Voltage reading (compared to the real high voltage reading between the tube terminals). |

|

|

| Reply #55

ihab17

Italy

240 Posts |

Posted - 01/28/2021 : 14:04:14

|

quote:

Originally posted by Damien68

OK, I am also thinking of a high voltage calibration factor, but we don't know what it's for, and even less how to set it.

There is a tube voltage parameter; we can guess what that is for.

But the HV calibration parameter????

I don't really know. They seem redundant. If I change the HV Factor to 000 (9V) for example, and then switch to TEXT MODE, I can see the tube voltage drops to around 9 Volts!! The tube voltage recommended by LND for this model 7317 is 500 volts, so I set the tube voltage according to this recommendation and the HV Calibration automatically gets to 140 (500V). Those two settings seem to be related |

|

|

| Reply #56

Damien68

France

780 Posts |

Posted - 01/28/2021 : 14:29:55

|

quote:

Originally posted by ihab17

quote:

Originally posted by Damien68

OK, I am also thinking of a high voltage calibration factor, but we don't know what it's for, and even less how to set it.

There is a tube voltage parameter; we can guess what that is for.

But the HV calibration parameter????

I don't really know. They seem redundant. If I change the HV Factor to 000 (9V) for example, and then switch to TEXT MODE, I can see the tube voltage drops to around 9 Volts!! The tube voltage recommended by LND for this model 7317 is 500 volts, so I set the tube voltage according to this recommendation and the HV Calibration automatically gets to 140 (500V). Those two settings seem to be related

HV calibration is the setting that calibrates the internal voltmeter used to measure and display the tube voltage.

In fact it does not change the tube voltage at all; it only changes the measurement that is made and displayed.

When you raise the voltage to 500V with the tube voltage setting, the software presumably checks the HV calibration parameter, considers it too low, and sets it to a minimum value by default.

The best is to keep the default value (do a factory reset).

To adjust this parameter you need to measure the real voltage supplied by the HV generator, but that is very delicate to do. There are threads that deal with the subject; it needs a 1 gigaohm resistance and suitable equipment.

This parameter should be set at the factory and stored in read-only memory alongside the serial number and the like.

It would also be best to keep the tube voltage setting at its default (factory reset); in fact you cannot know the real tube voltage until you have calibrated the internal voltmeter.

Depending on the components used, the voltmeter error can range from 1% to 50% (depending on the precision of the 1 Gohm resistors soldered into the voltmeter to measure the voltage).

After calibration the reading is OK, but without it, it means nothing. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/28/2021 14:44:50 |

|

|

| Reply #57

EmfDev

2403 Posts |

Posted - 01/28/2021 : 14:35:23

|

| Thank you Damien68 for explaining. The HV calibration does not change the real tube voltage. It is best to leave it to the default value unless you can measure the real voltage between the terminals as Damien68 mentioned. |

|

|

| Reply #58

WigglePig

31 Posts |

Posted - 01/29/2021 : 02:15:48

|

quote:

OK that must be pretty cool,

is it better than 2 monitors?

Actually it isn't cool at all; no one needs an 8k display and it is all just too much. This display is in a laptop (!) and is ridiculous really. I would suggest avoiding them. |

|

|

| Reply #59

Damien68

France

780 Posts |

Posted - 01/29/2021 : 02:51:50

|

quote:

Originally posted by WigglePig

quote:

OK that must be pretty cool,

is it better than 2 monitors?

Actually it isn't cool at all; no one needs an 8k display and it is all just too much. This display is in a laptop (!) and is ridiculous really. I would suggest avoiding them.

Thanks for your remark, WigglePig. I have always been intrigued by these monitors, but I will stay with my 2 FHD monitors. Sometimes I would like to push the edges out, but it will stay like that. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 04:09:32 |

|

|

| Reply #60

Damien68

France

780 Posts |

Posted - 01/29/2021 : 02:54:05

|

quote:

Originally posted by EmfDev

CPS_true = CPS_obs / (1 - CPS_obs * deadtime)

This formula was used as discussed in other thread if deadtime is enabled. If the CPS_obs is too high and the denominator becomes negative, it will give wrong reading. So there is a check that uses deadtime as denominator. So if the dead time is 0, it seemed like it caused the reading to become 0. Deadtime is not supposed to be 0 and there is a menu to disable it in just 2 clicks.

The sanity check can be optimised like this:

if ((deadtime == 0) || (!DeadtimeEnable)) return cps_obs;

if (cps_obs >= 1000000 / deadtime) return MAXVALUE; // with deadtime in microseconds

Note: 1000000 / deadtime can be calculated once and stored.

But your check is OK too. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 04:03:08 |

|

|

| Reply #61

ullix

Germany

1242 Posts |

Posted - 01/29/2021 : 04:26:23

|

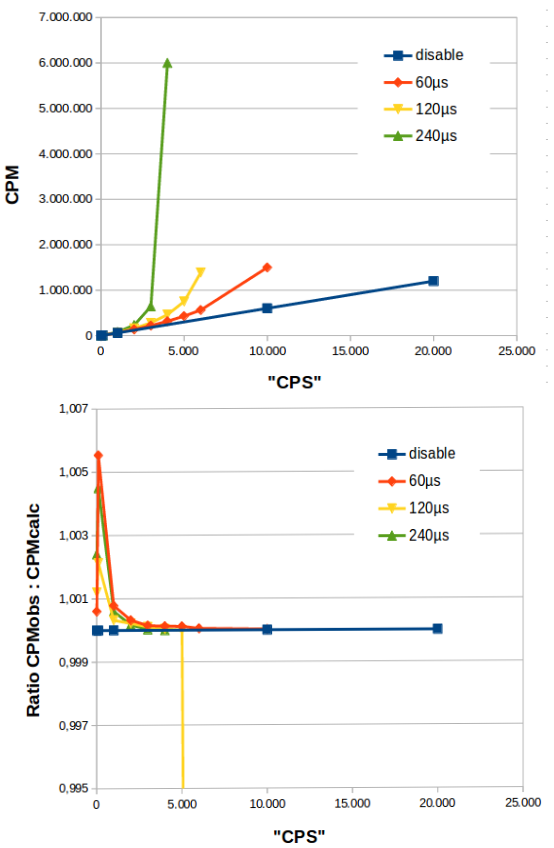

The last day has brought some significant insight, mainly thanks to someone not only owning a signal generator but also knowing how to use it! Damien has also drawn the right conclusions. I am more graphically oriented, so here is my assessment:

Upper picture shows the raw data. CPS is in quotes, because these are pulses generated by the signal generator. However, the counter thought they were coming from a tube, which had pulse lengths as indicated, and corrected these "CPS" to the CPM values on the y-axis.

For the green curve the "CPS" was 4000, or 1 pulse every 250us. The deadtime was set to 240us, and so the denominator of the correction formula gets close to zero and thus the CPM values go to infinity. In the other cases, CPS and deadtime were not as close as in the green case, but they are also about to "explode".

In principle this is the correct behavior. But I wanted to make sure the correct formula is applied, and so in the lower graph I show the ratio of the values from my formula to those from the counter, and - lo and behold - they are the same! (Within 0.5% measurement error.)

Conclusion: the deadtime formula is implemented correctly in the counter (GMC-500+ here).

But since this formula effectively switches itself off when you enter a deadtime of zero, and lets the shown CPM be exactly the recorded ones, why then did the counter fail and instead switch the counts off?

EmfDev:

quote:

If the CPS_obs is too high and the denominator becomes negative, it will give wrong reading. So there is a check that uses deadtime as denominator. So if the dead time is 0, it seemed like it caused the reading to become 0.

This is in contradiction to the assumptions underlying the correction formula. Once your count rate is so high that each count pulse follows the previous one without a gap between them, there cannot be any higher count rate! This becomes a bit more complicated in a Poisson governed pulse creation as opposed to pulses from a signal generator, but as soon as you are getting negative corrections you know your pulses are shorter than the set dead time!

This is one outcome of the obviously wrong deadtime of 330us for the LND7317 tube, where the manufacturer's specs say 40us!

Another outcome of this is that at rather low count rates - CPS~50 - as Damien has found out, certain count rates will be missing. This turns an originally Poissonian distribution into a non-Poissonian one. The data become wrong! On top of that, you are creating counts where none exist!

|

|

|

| Reply #62

Damien68

France

780 Posts |

Posted - 01/29/2021 : 05:51:16

|

quote:

Originally posted by ullix

Another outcome of this is that at rather low count rates - CPS~50 - as Damien has found out, certain count rates will be missing. This turns an originally Poissonian distribution into a non-Poissonian one. The data become wrong! On top of that, you are creating counts where none exist!

With DT = 330uS, the first missing count is @55 CPS (i.e. 3300 CPM), so it's quite high, but it's good to know. That also means that under 55 CPS the dead time @ 330uS does not modify anything.

So under 1000-2000 average CPM, there is no need to worry about the dead time. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 06:30:52 |

|

|

| Reply #63

ullix

Germany

1242 Posts |

Posted - 01/29/2021 : 06:52:53

|

Damien's data for 120us deadtime:

quote:

10kHz : ----- displayed value is truncated but CPS in flash is 52 059 121 (it is CPS value not CPM)

At 10kHz => CPS=10000 the correction for 120us deadtime is -5 (minus 5). Thus the corrected value would be CPS=-50000, and CPM=-3Mio, note the minus at both numbers! I don't know which number types are used in the counter (uint32?, int32?, float?) but I guess these numbers shown are not "truncated" but are the positive representation of an overflow after a non-signed number was multiplied with a negative number.

So, the checking for dead time occurs basically at the wrong end. Not dead-time should be checked, but high count rate!

But this becomes a bit more complicated, because a real tube couldn't deliver any counts beyond that limit of 1/deadtime! Unfortunately, the counter cannot hold that limit, because once the pulses become overlapping, the count rate drops to zero - the counter cannot discriminate where one pulse ends and the next begins.

More realistic is to check at some 10%...20% below the 1/deadtime count rate limit.
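As a sketch of what checking the count rate (rather than the dead time) could look like, the following C snippet computes the correction factor and tests a rate against a margin below 1/deadtime. The function names and the `margin` parameter are hypothetical, introduced only to illustrate the idea.

```c
#include <stdbool.h>

/* Correction factor CPS_true / CPS_obs = 1 / (1 - CPS_obs * deadtime).
 * It turns negative once CPS_obs exceeds 1/deadtime. */
double correction_factor(double cps_obs, double deadtime_s)
{
    return 1.0 / (1.0 - cps_obs * deadtime_s);
}

/* True while the observed rate stays below (1 - margin) of the 1/deadtime
 * limit; margin = 0.10 ... 0.20 corresponds to the 10%...20% suggested above. */
bool rate_within_limit(double cps_obs, double deadtime_s, double margin)
{
    if (deadtime_s <= 0.0) {
        return true;  /* no dead time set: nothing to correct, nothing to limit */
    }
    return cps_obs < (1.0 - margin) / deadtime_s;
}
```

For 10 kHz pulses and 120us, `correction_factor(10000.0, 120e-6)` evaluates to about -5, the value mentioned above, and `rate_within_limit(10000.0, 120e-6, 0.10)` is false because the 10% margin puts the limit at 7500 CPS.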

|

|

|

| Reply #64

ullix

Germany

1242 Posts |

Posted - 01/29/2021 : 07:10:19

|

quote:

So under 1000-2000 average CPM, there is no need to worry about the dead time

I am afraid this may not be true, depending on how the calculation is done:

with deadtime 330us, and CPS=6, it should be CPM=360, but corrected CPM=361, so already one extra!

You'll have to round CPS, either up or down; you can't always take floor(CPS), as this would introduce another error!

I would stay away from this kind of correction anyway, and certainly not make it the default. If you have clean CPS or CPM to begin with you can always make the correction afterwards.
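The difference between the two ways of calculating can be sketched in C as follows. Both helper names are made up for this illustration, and the per-second variant assumes truncation to whole counts and a minute made of identical seconds, which is a simplification.

```c
/* Correct a whole minute's count after the fact.
 * cpm_obs / 60 is the mean observed rate in counts per second. */
double correct_cpm(double cpm_obs, double deadtime_s)
{
    double mean_cps = cpm_obs / 60.0;
    return cpm_obs / (1.0 - mean_cps * deadtime_s);
}

/* Per-second correction truncated to a whole count, then summed over one
 * minute of identical seconds (a simplification for illustration). */
long correct_per_second_then_sum(long cps_obs, double deadtime_s)
{
    long corrected_cps = (long)(cps_obs / (1.0 - cps_obs * deadtime_s));
    return 60 * corrected_cps;
}
```

With 330us and CPS=6, `correct_cpm(360.0, 330e-6)` is about 360.7, which rounds to 361, whereas `correct_per_second_then_sum(6, 330e-6)` stays at 360 because 6.01 truncates back to 6.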

|

|

|

| Reply #65

Damien68

France

780 Posts |

Posted - 01/29/2021 : 07:35:19

|

quote:

Originally posted by ullix

quote:

So under 1000-2000 average CPM, there is no need to worry about the dead time

I am afraid this may not be true, depending on how the calculation is done:

with deadtime 330us, and CPS=6, it should be CPM=360, but corrected CPM=361, so already one extra!

You'll have to round CPS, either up or down; you can't always take floor(CPS), as this would introduce another error!

I would stay away from this kind of correction anyway, and certainly not make it the default. If you have clean CPS or CPM to begin with you can always make the correction afterwards.

Yes, if we make the compensation at the CPM level, because with CPM we work with integers 60 times higher, so we have better granularity:

on 6 CPS, 1 unit is 17% of its value

on 360 CPM, 1 unit is 0.28% of its value

EDIT: by rounding by +/- 0.5, we just shift the +1 transitions to the left; it will be a little more precise for the average, but it will not change much.

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 11:21:13 |

|

|

| Reply #66

Damien68

France

780 Posts |

Posted - 01/29/2021 : 08:00:01

|

quote:

Originally posted by ullix

Damien's data for 120us deadtime:

quote:

10kHz : ----- displayed value is truncated but CPS in flash is 52 059 121 (it is CPS value not CPM)

At 10kHz => CPS=10000 the correction for 120us deadtime is -5 (minus 5). Thus the corrected value would be CPS=-50000, and CPM=-3Mio, note the minus at both numbers! I don't know which number types are used in the counter (uint32?, int32?, float?) but I guess these numbers shown are not "truncated" but are the positive representation of an overflow after a non-signed number was multiplied with a negative number.

So, the checking for dead time occurs basically at the wrong end. Not dead-time should be checked, but high count rate!

But this becomes a bit more complicated, because a real tube couldn't deliver any counts beyond that limit of 1/deadtime! Unfortunately, the counter cannot hold that limit, because once the pulses become overlapping, the count rate drops to zero - the counter cannot discriminate where one pulse ends and the next begins.

More realistic is to check at some 10%...20% below the 1/deadtime count rate limit.

Yes, it's pretty special.

In the event of an impossible situation, they must detect it and then return a default value, which must be the highest one.

I saw 52059121 in the flash, which equals 0x031A5BF1, a 32-bit CPS coded on 4 bytes (the flash data record format is adaptive), and the CPM on the device screen increases in a totally crazy way to more than 8 or 10 digits. 8,000,000, for example, we can still read, but after that it goes up again and again and we can no longer read it.

But it's OK.

To simplify, they must do the following:

Calculate once:

CPS_Max = 1000000 / deadtime; // deadtime in microseconds

Before doing this calculation they necessarily check that deadtime != 0

and set a boolean:

deadtime_is_Null = (deadtime == 0);

Afterwards, in the processing, they only have to do:

if (deadtime_is_Null) return 0;

if (cps_obs >= CPS_Max) return MAXVALUE;

return formula;

It is clear that it would have been a little better, and no more complicated, to replace

if (deadtime_is_Null) return 0;

by

if (deadtime_is_Null) return cps_obs;
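Put together, the steps above could look like the following self-contained C sketch. The struct, the function names and the `CPS_OVERLOAD` marker are all hypothetical; this is one possible arrangement of the checks described here, not the device's actual code.

```c
#include <stdbool.h>
#include <stdint.h>

#define CPS_OVERLOAD UINT32_MAX  /* hypothetical "overload" marker value */

typedef struct {
    bool     enabled;      /* dead-time correction switched on in the menu */
    uint32_t deadtime_us;  /* configured dead time in microseconds */
    uint32_t cps_limit;    /* 1000000 / deadtime_us, computed once */
} deadtime_config;

/* Call whenever the setting changes, so the division is done only once. */
deadtime_config deadtime_configure(bool enabled, uint32_t deadtime_us)
{
    deadtime_config cfg = { enabled, deadtime_us, 0 };
    if (deadtime_us != 0) {
        cfg.cps_limit = 1000000u / deadtime_us;
    }
    return cfg;
}

uint32_t deadtime_apply(const deadtime_config *cfg, uint32_t cps_obs)
{
    if (!cfg->enabled || cfg->deadtime_us == 0) {
        return cps_obs;        /* zero dead time means "no correction" */
    }
    if (cps_obs >= cfg->cps_limit) {
        return CPS_OVERLOAD;   /* at or beyond the precomputed limit */
    }
    /* cps_obs < cps_limit guarantees busy_us < 1000000, so no divide by zero. */
    uint64_t busy_us = (uint64_t)cps_obs * cfg->deadtime_us;
    uint64_t corrected = (uint64_t)cps_obs * 1000000u / (1000000u - busy_us);
    return corrected > UINT32_MAX ? UINT32_MAX : (uint32_t)corrected;
}
```

In this arrangement a dead time of 0 simply passes the observed CPS through, which is the behaviour argued for above, and rates at or above the limit produce a recognisable overload value instead of a wrapped number.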

|

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/30/2021 10:01:44 |

|

|

| Reply #67

Damien68

France

780 Posts |

Posted - 01/29/2021 : 08:46:37

|

The dead time is only a model; we often talk about minimum dead time, or dead time @ 400V for SBM-20 tubes.

The dead time must depend on a lot of factors.

Even if in theory we cannot have a CPS above (1s / deadtime), in practice this can happen.

I don't know exactly what causes the dead time of the tube; the electrical recharging of the tube is a factor, but I feel it's not just that.

For very high detection levels people talk about pumping the tube; I don't really know what that is.

But we cannot have superposition of pulses, because to trigger a new pulse the tube must be charged at least to the plateau level. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 09:16:02 |

|

|

| Reply #68

Damien68

France

780 Posts |

Posted - 01/29/2021 : 09:43:02

|

Another solution:

#define MAXVALUE 0xFFFFFFFF

#define MAXVALUE2 0xFFFFFFFF

uint32_t CPS_Max;

Calculate once :

if(deadtime==0){

CPS_Max = MAXVALUE;

}else{

CPS_Max = 1000000/deadtime;

}

Afterwards, in the processing:

if (cps_obs >= CPS_Max) return MAXVALUE2;

return formula;

That's all |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 11:19:09 |

|

|

| Reply #69

EmfDev

2403 Posts |

Posted - 01/29/2021 : 11:24:17

|

| It is already in the algorithm, max cps is 1000000/deadtime - 1. It's just that deadtime = 0 wasn't taken care of because dead time disable/enable is already there. |

|

|

| Reply #70

Damien68

France

780 Posts |

Posted - 01/29/2021 : 11:50:42

|

quote:

Originally posted by EmfDev

It is already in the algorithm, max cps is 1000000/deadtime - 1. It's just that deadtime = 0 wasn't taken care of because dead time disable/enable is already there.

Yes, it's not a very serious issue |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/29/2021 11:52:49 |

|

|

| Reply #71

EmfDev

2403 Posts |

|

| Reply #72

Damien68

France

780 Posts |

Posted - 01/30/2021 : 00:50:51

|

quote:

Originally posted by EmfDev

It is already in the algorithm, max cps is 1000000/deadtime - 1.

Exactly: minus 1, because the variable is named "CPS_max".

If it had been called "CPS_crashdown_minimum_value", my formula would have been right |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/30/2021 01:55:57 |

|

|

| Reply #73

Damien68

France

780 Posts |

|

| Reply #74

ullix

Germany

1242 Posts |

Posted - 01/30/2021 : 02:15:15

|

@EmfDev:

quote:

cps is 1000000/deadtime - 1

What are the units in this thing? Assuming deadtime in microseconds and cps in counts per second, I get

1000000 / 330E-6 -1 = 3030303030 -1

That seems meaningless?

But an approach of a fixed upper value for everything is a wrong concept anyway. This upper limit must be made specific to the deadtime set in the counter.

The proper approach would be this:

1.) Decide what correction factor to allow

When the correction is several times the signal, you are typically in nonsense-land. I would set the limit to 30%, so the correction factor to cf = 1.3

2.) Now determine the max countrate which would result in this correction factor. At this level the counter should only show "Hi Alert" (or similar), but not give out numbers.

This countrate is easily determined: From true = obs / (1 - obs * dtime) simply set true = cf * obs, and you can solve for obs:

true = obs / (1 - obs * dtime)

with true = cf * obs:

cf * obs = obs / (1 - obs * dtime)

cf = 1 / (1 - obs * dtime)

1 - obs * dtime = 1 / cf

and finally:

obs = (cf - 1) / (dtime * cf)

Examples: for obs = CPSmax

cf = 1.3 dt = 330us => CPSmax = 699 (CPMmax = 41940)

cf = 1.3 dt = 120us => CPSmax = 1923 (CPMmax = 115380)

cf = 1.3 dt = 50us => CPSmax = 4615 (CPMmax = 276900)
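The example values above can be checked with a one-line C function. The name `max_obs_cps` is made up for this sketch; the arithmetic is just the last line of the derivation.

```c
/* Highest observed rate (CPS) at which the correction factor is still <= cf.
 * From cf = 1 / (1 - obs * dtime)  =>  obs = (cf - 1) / (dtime * cf).
 * deadtime_s is in seconds; cf must be greater than 1. */
double max_obs_cps(double cf, double deadtime_s)
{
    return (cf - 1.0) / (deadtime_s * cf);
}
```

Truncated to whole counts, `max_obs_cps(1.3, 330e-6)`, `max_obs_cps(1.3, 120e-6)` and `max_obs_cps(1.3, 50e-6)` give 699, 1923 and 4615 CPS, in line with the examples listed above.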

|

|

|

| Reply #75

Damien68

France

780 Posts |

Posted - 01/30/2021 : 02:26:55

|

quote:

Originally posted by ullix

@EmfDev:

quote:

cps is 1000000/deadtime - 1

What are the units in this thing? Assuming deadtime in microsecond and cps in second I get

1000000 / 330E-6 -1 = 3030303030 -1

That seems meaningless?

in your calculation you entered the deadtime in seconds

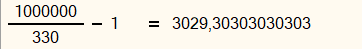

the correct calculation is as follows:

and must be updated if you change the dead time value. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/30/2021 02:35:33 |

|

|

| Reply #76

Damien68

France

780 Posts |

Posted - 01/30/2021 : 02:32:40

|

The correction factor could be limited to avoid stupid situations, but as it is, it's not difficult for the user to understand what is going on. Normally it should display "OVERLOAD" if the value is too big or invalid.

Limiting the correction factor could mislead the user. |

Mastery is acquired by studying, with it everything becomes simple |

Edited by - Damien68 on 01/30/2021 07:32:36 |

|

|

| Reply #77

EmfDev

2403 Posts |

Posted - 02/01/2021 : 12:06:59

|

| It is as Damien68 said: 1 s / deadtime in s = 1/0.000330 = 1000000/330. The "-1" is to prevent CPS/(1-CPS*.000330) from becoming CPS/(1-1). |
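A small C sketch of this limit, assuming integer arithmetic with the dead time in microseconds (the thread does not state the number types used, so that is an assumption, as are the function names):

```c
#include <stdint.h>

/* Highest accepted CPS as described above: 1000000 / deadtime - 1,
 * in integer arithmetic. deadtime_us must be between 1 and 1000000. */
uint32_t max_cps(uint32_t deadtime_us)
{
    return 1000000u / deadtime_us - 1u;
}

/* Denominator of the correction, scaled by 1e6:
 * 1000000 - cps * deadtime_us. Zero here means CPS/(1-1). */
int64_t scaled_denominator(uint32_t cps, uint32_t deadtime_us)
{
    return 1000000 - (int64_t)cps * deadtime_us;
}
```

For 330us this gives `max_cps(330)` = 3029, where the scaled denominator is still 430. The denominator becomes exactly zero only when the dead time divides 1000000 evenly, for example 250us at 4000 CPS, and there the "-1" caps the rate at 3999.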

|

|

| Reply #78

ihab17

Italy

240 Posts |

Posted - 01/10/2023 : 09:01:14

|

quote:

Originally posted by ullix

The data reminded me of some results obtained more than 2 years back when I investigated how external leads to a tube need to be designed. It turned out that the capacitance of the combined leads-anode-resistor-tube system is a very essential component.

You are a rocking Nuclear Physicist and an Electrical Engineer (even if you may not be one)! Soon Ullix will unleash his wrath and come with an empirical proof of the deficiencies in the implementation of this counter which seems he has nailed with less than 20 eurocents resistor! Thank you for your patience man. YOU ARE A HEROOOOOOOOOOOOOOOOOOOOOOOOO. When I come back home I will come with more details and more data and real readings with my DVM after the introduction of a simple resistor, a more beautiful and ******y Poisson curve emerged... could this be the long awaited GEIGERA????? After all, GEIGERA turned out to be a resistor? D'oh I was hoping for something else, but I'll resist for now!

|

|

|
